
The new ZYLIA Streaming Application lets you set up binaural and Ambisonics audio streaming on your Mac without using a Digital Audio Workstation. It’s FREE for all current ZYLIA Ambisonics Converter owners, which includes users of the ZYLIA PRO Ambisonics and ZYLIA PRO Have it all! sets.

How can you use 3D audio streaming?

To set up binaural and Ambisonics audio streaming you will need the following prerequisites:

Learn everything about ZYLIA Streaming Application with the following video presentation:

In the video we cover:


We are happy to announce the new release of the ZYLIA Streaming Application (v1.0.1).

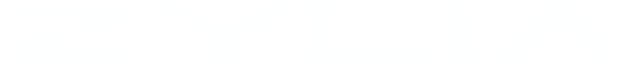

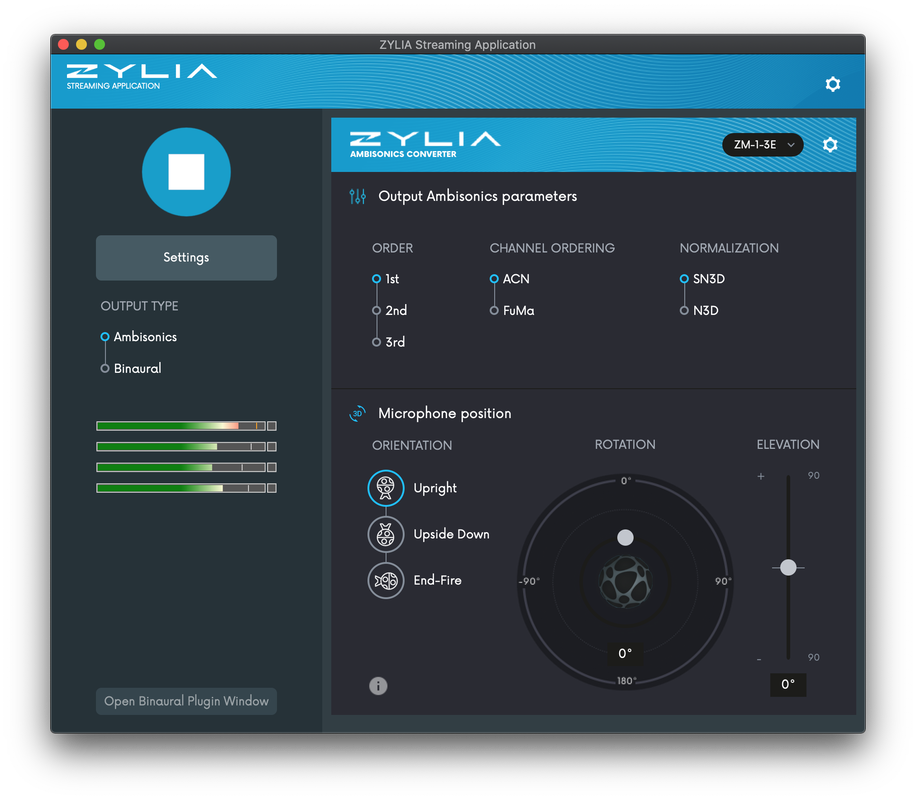

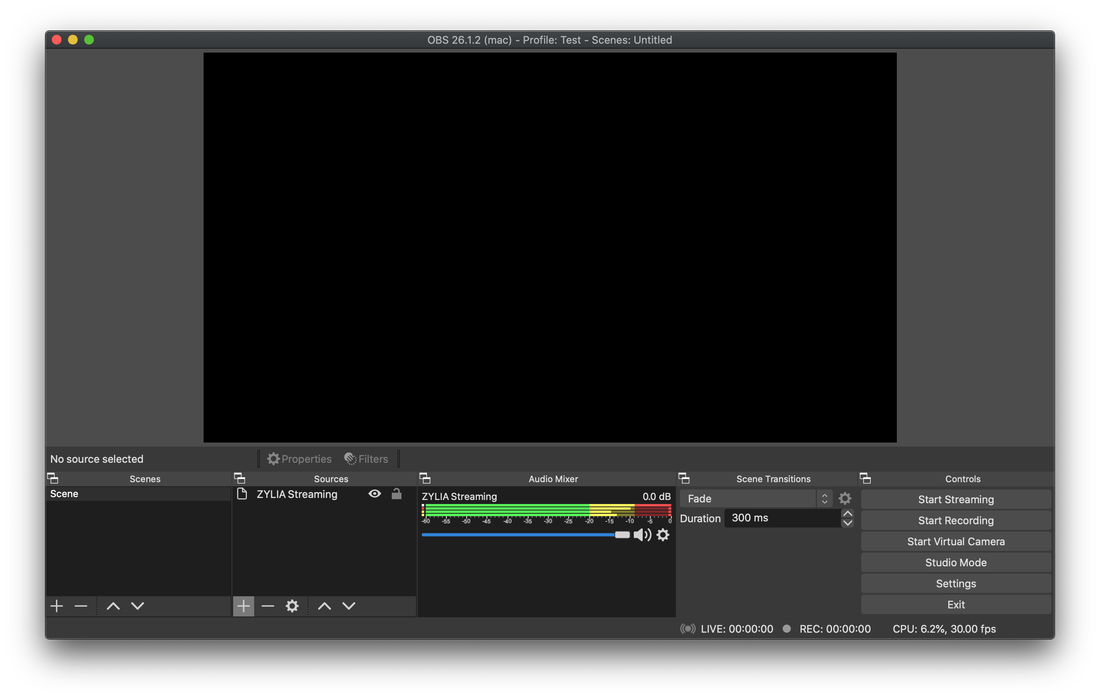

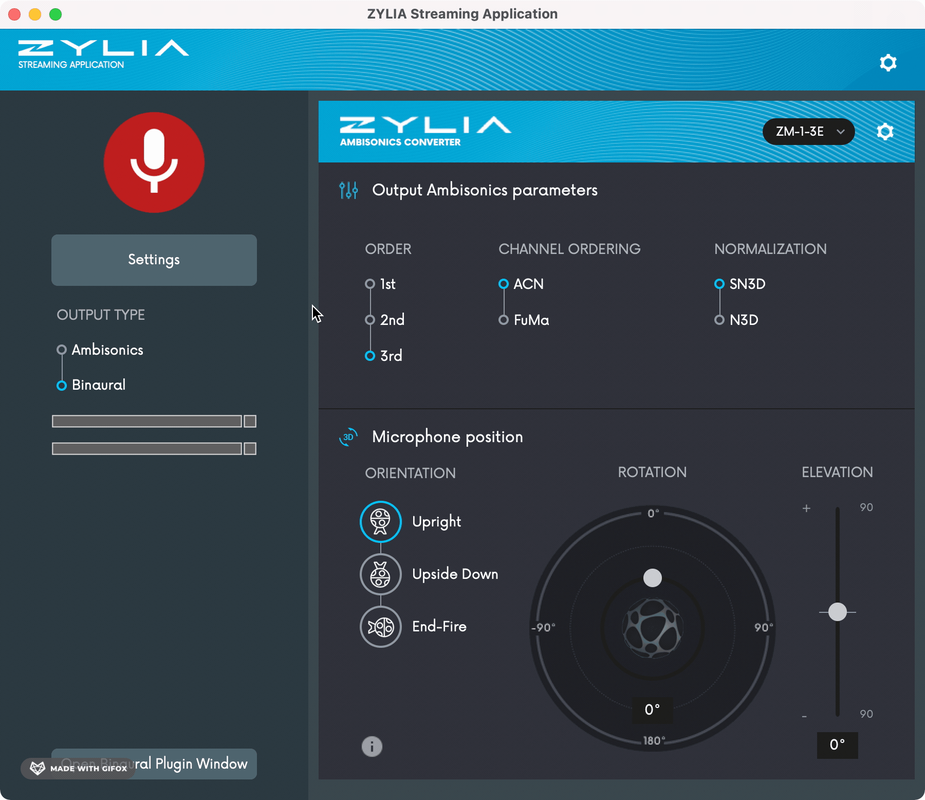

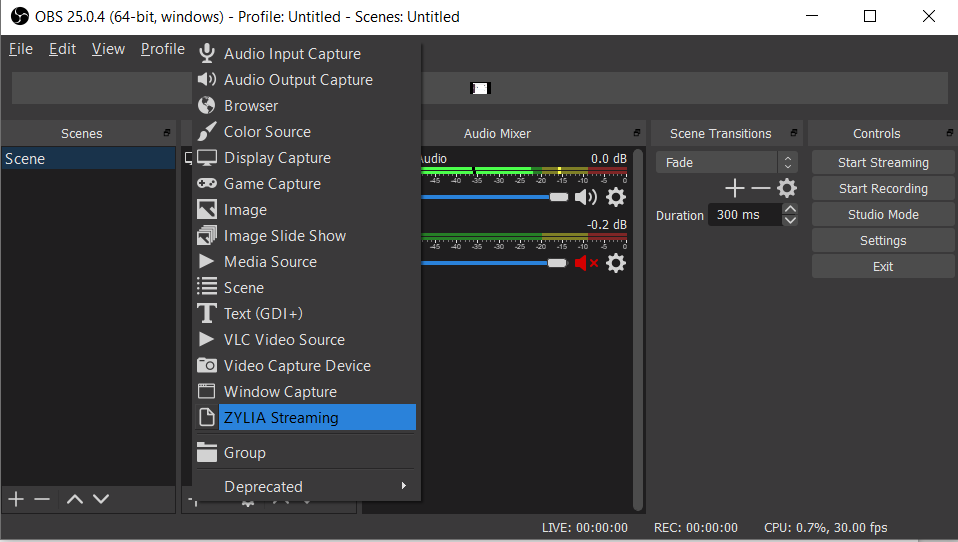

With this version, we fixed the problem with streaming via the OBS plugin when OBS is in stereo mode.

We are happy to announce the very first release of ZYLIA Streaming Application v1.0 for macOS. This is our new solution for quickly and easily setting up a live stream of your music performance in the Ambisonics format. The core of this application is ZYLIA Ambisonics Converter, which converts the ZM-1 multi-channel recordings into Higher Order Ambisonics (HOA). With this tool, you can stream your audio content directly in HOA format (1st, 2nd, or 3rd order) or in binaural format by using an additional binauralization plugin.

It can be easily configured with streaming software such as OBS (Open Broadcaster Software), which lets you combine your audio stream with a video stream and transmit it directly to most of the well-known multimedia platforms (e.g. YouTube, Facebook, Twitch). In addition to this application, we provide the beta OBS Plugin, which takes the output of ZYLIA Streaming Application directly, without using any virtual sound card.

ZYLIA Streaming Application will be available as an addition to all packages that contain the ZYLIA Ambisonics Converter plugin, e.g. the ZYLIA PRO Ambisonics package.

Main features:

#zylia #streaming #3Daudio #Ambisonics #binaural #live #music

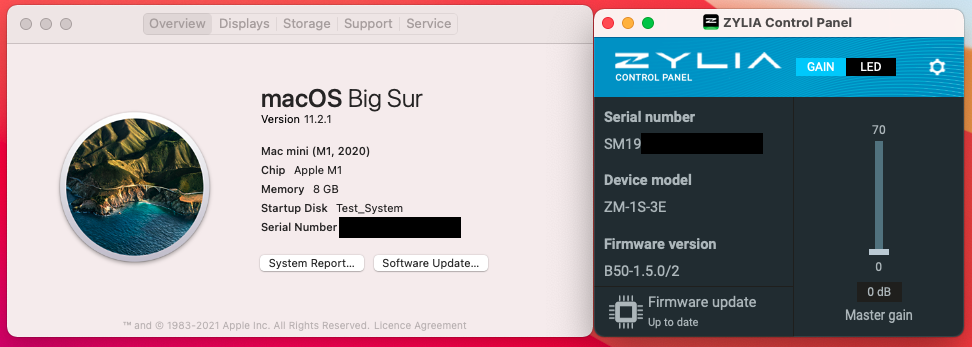

We are happy to announce the new release of ZYLIA ZM-1 drivers for macOS. This driver supports the latest Apple computers with an M1 processor. As it is still a kernel extension, you may need to enable system extensions on M1 systems. Instructions are available on the Apple webpage and also here. All other ZYLIA software can also run on these machines in Intel compatibility mode with Rosetta 2 (https://support.apple.com/en-us/HT211861).
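To see whether a given terminal session on an M1 machine runs natively or in Intel compatibility mode, you can query the `sysctl.proc_translated` key that macOS exposes. The sketch below is only an illustration and not part of any ZYLIA tool: it reports on the Python process that runs it, not on the ZYLIA applications themselves, and the function names are hypothetical.

```python
import platform
import subprocess
from typing import Optional


def parse_proc_translated(raw: str) -> Optional[bool]:
    """Interpret the text printed by `sysctl -n sysctl.proc_translated`."""
    value = raw.strip()
    if value == "1":
        return True   # the process is being translated by Rosetta 2
    if value == "0":
        return False  # the process runs natively
    return None       # empty or unexpected output


def running_under_rosetta() -> Optional[bool]:
    """Return True or False on macOS, or None when the answer is unknown."""
    if platform.system() != "Darwin":
        return None  # the key only exists on macOS
    try:
        result = subprocess.run(
            ["sysctl", "-n", "sysctl.proc_translated"],
            capture_output=True, text=True, check=False,
        )
    except OSError:
        return None
    if result.returncode != 0:
        # Systems without Rosetta support do not expose this key.
        return None
    return parse_proc_translated(result.stdout)


if __name__ == "__main__":
    print("machine:", platform.machine())
    print("under Rosetta 2:", running_under_rosetta())
```

A result of `None` simply means the key could not be read, which is expected on systems that do not support Rosetta.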

We are happy to announce the new release of ZYLIA Studio (v2.1).

With this version, we introduce the following features:

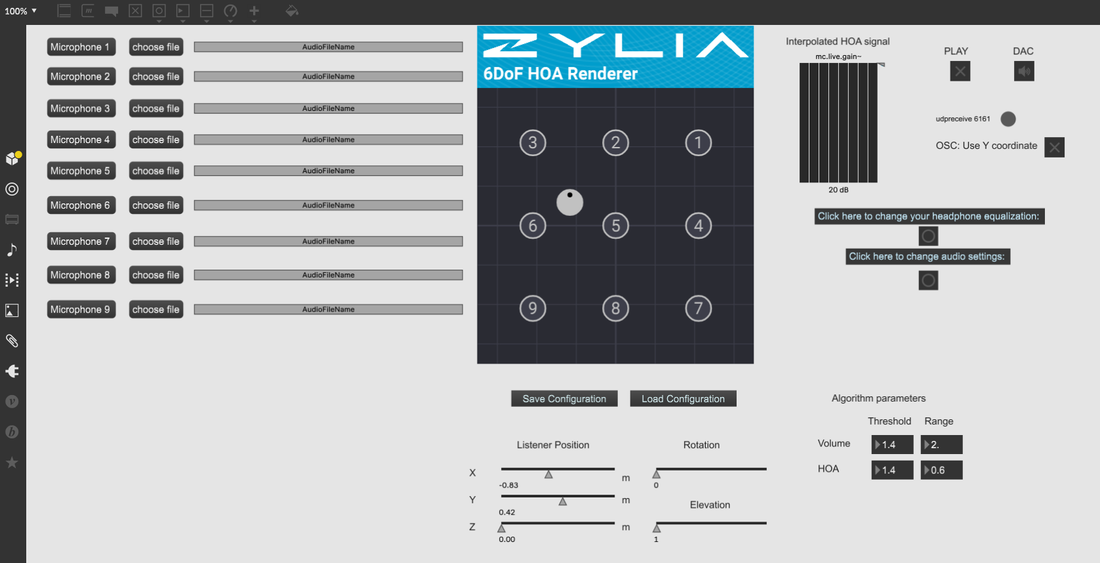

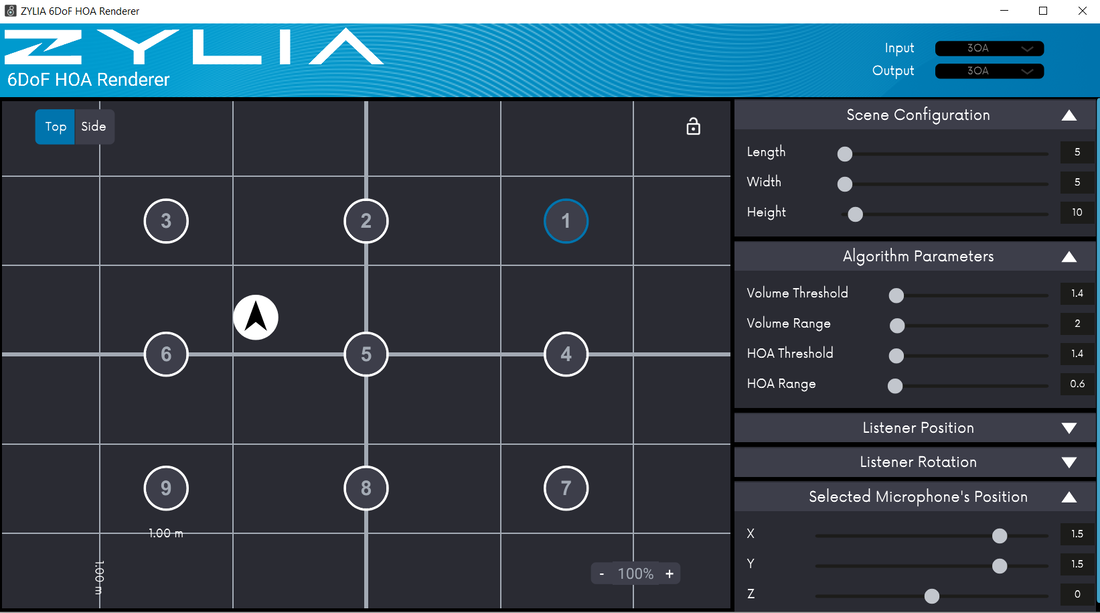

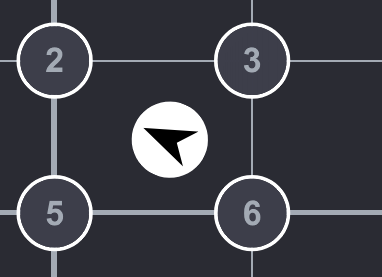

We are happy to announce the release of ZYLIA 6DoF HOA Renderer for Max/MSP v2.0 (macOS, Windows). This software is a key element of the ZYLIA 6DoF Navigable Audio system. It allows you to reproduce the sound field at a given location based on Ambisonics signals recorded with ZM-1S microphones. The plugin works in the Max/MSP environment, so you can use this tool directly in your project. Please refer to the provided example project with our plugin and the manual for ZYLIA 6DoF HOA Renderer.

The newest ZYLIA 6DoF HOA Renderer for Max/MSP has a lot of improvements. The new User Interface can be accessed in a separate window. It allows you to set up the whole configuration directly in the plugin, without using the message mechanism of Max/MSP. The UI also allows you to lock the microphones’ positions, preventing unintentional changes to the scene configuration.

The next improvement is an increased number of supported signals: you can now pass up to 30 HOA signals to the plugin and create a 6DoF experience on much larger scenes. If you would like to test this plugin, you can use a 7-day free trial and play around with our 6DoF audio rendering algorithm. The test recording data for the plugin can be found on our webpage.

We are happy to announce the new release of ZYLIA ZM-1 drivers for macOS (v2.9.1). This driver supports the latest release of macOS 11.1 Big Sur.

We are happy to announce the new release of ZYLIA ZM-1 drivers for macOS and Linux.

macOS driver v2.9.0

Linux driver v2.5.0

by Pedro Firmino

This tutorial is based on the solution developed by Professor Angelo Farina for preparing a 360 video with 3rd Order Ambisonics audio (source: http://www.angelofarina.it/Ambix+HL.htm). In this adaptation, we will show you how to create a 360 video with 3rd Order Ambisonics audio using:

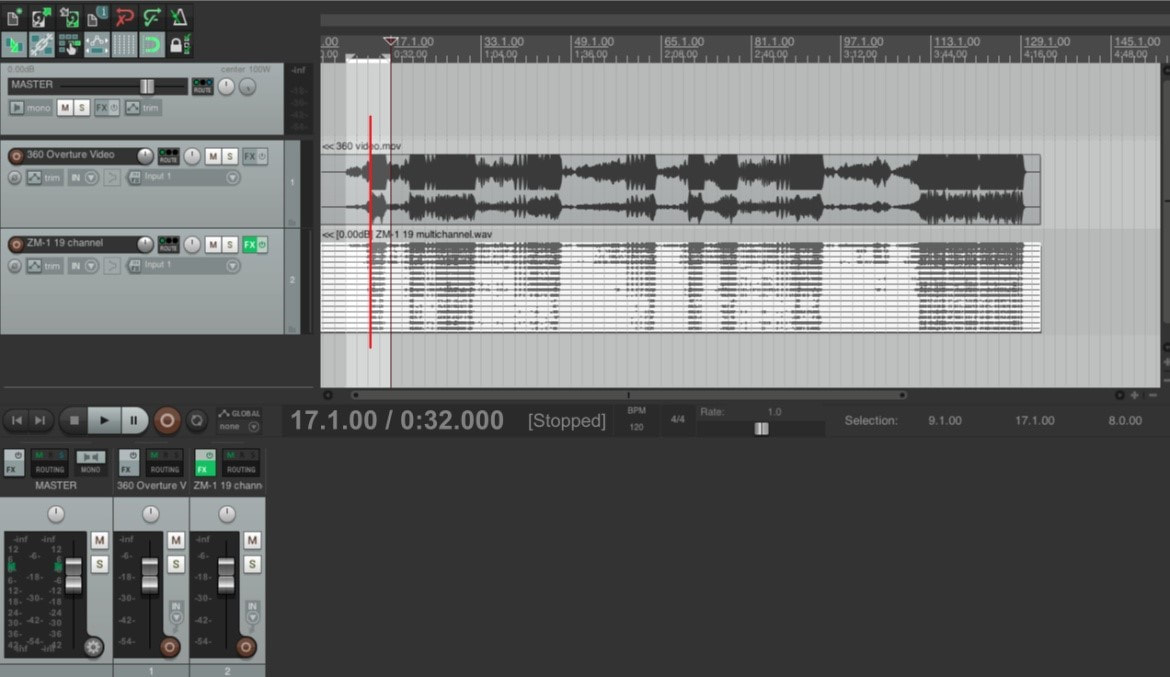

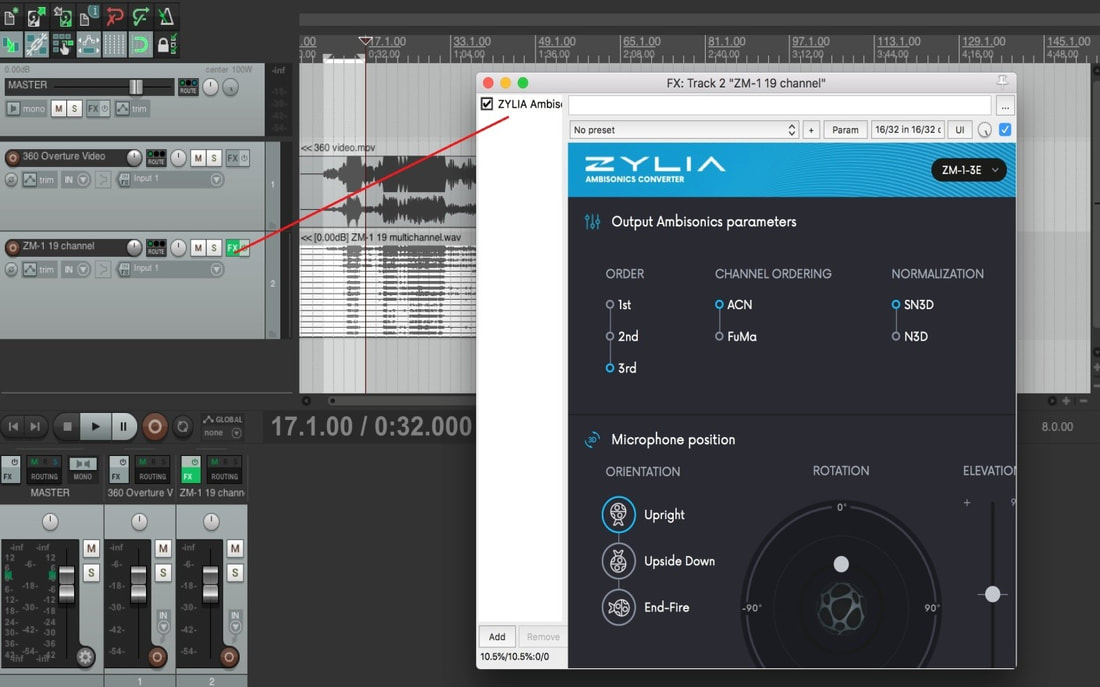

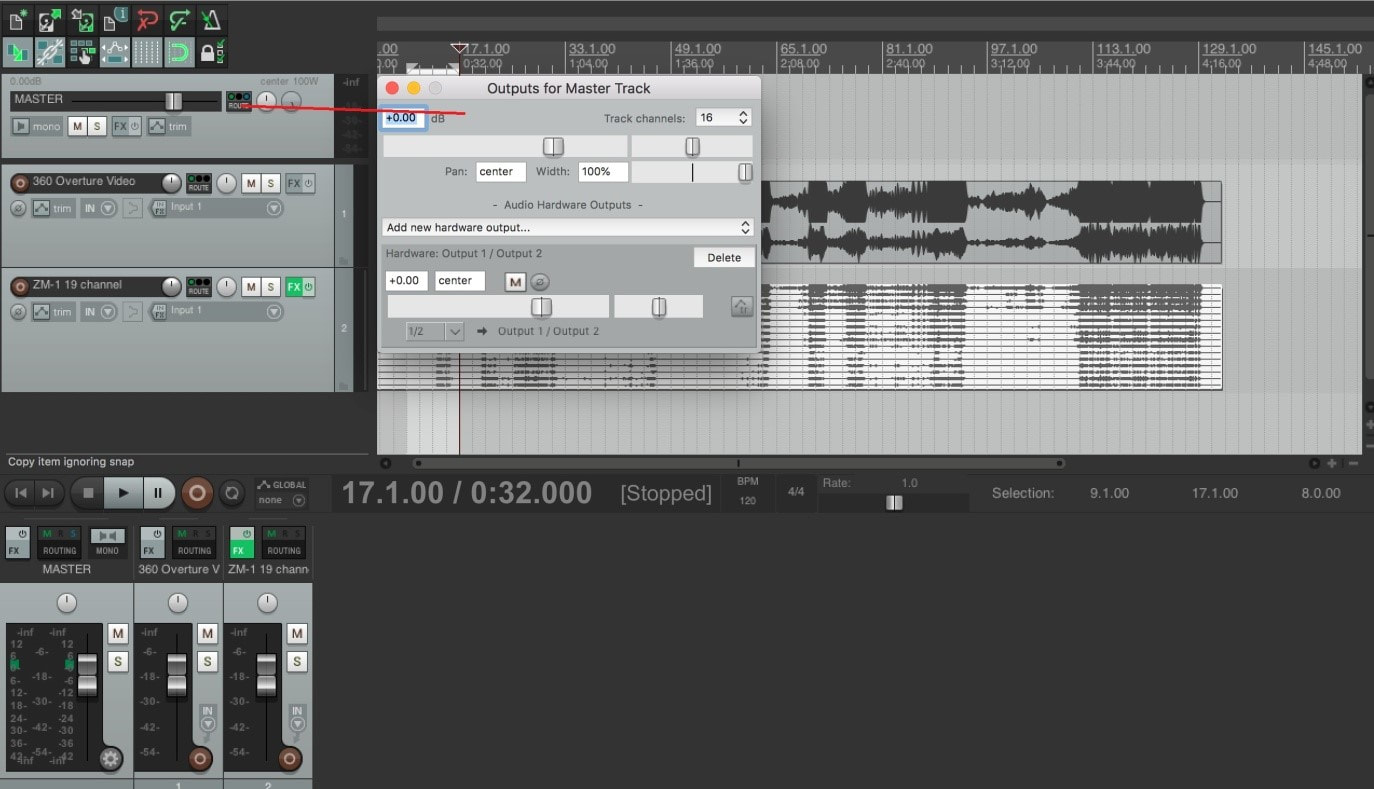

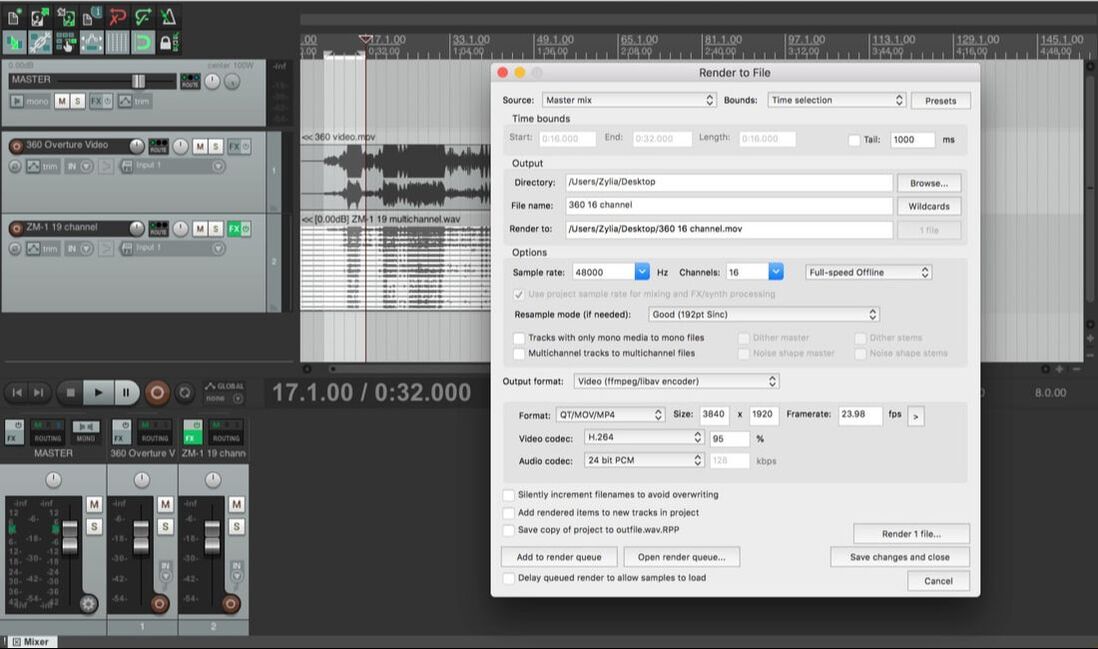

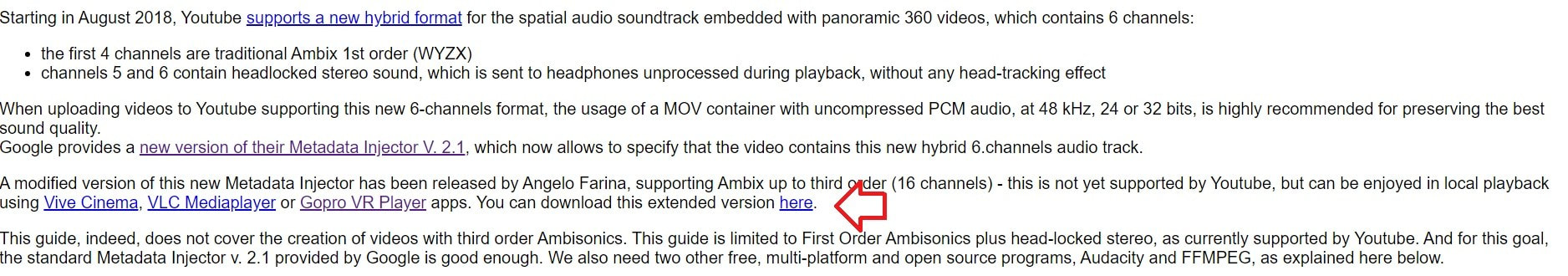

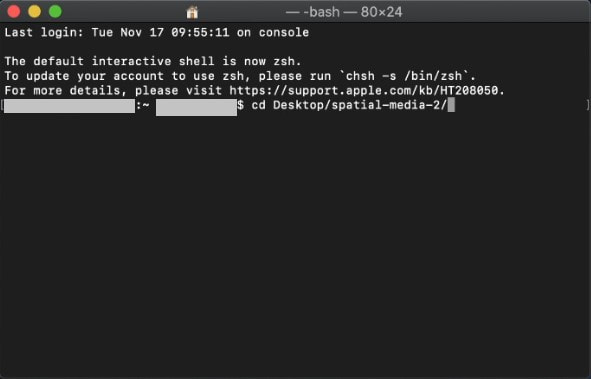

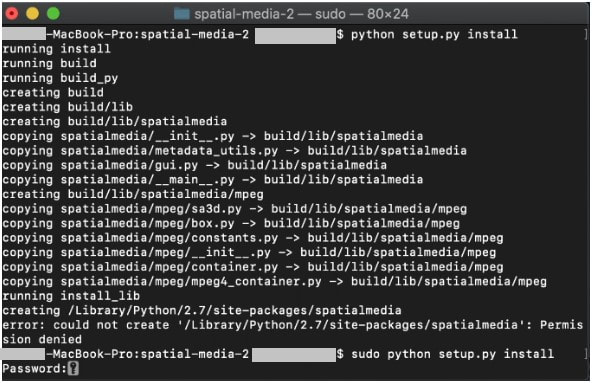

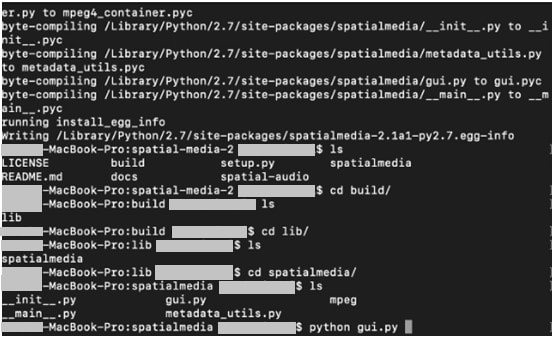

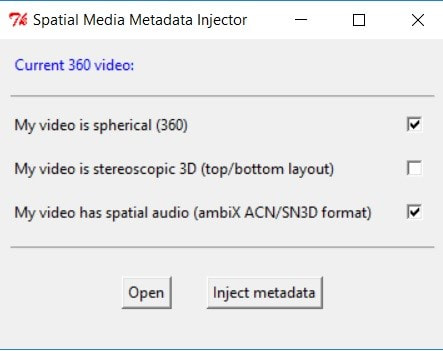

This tutorial consists of 2 parts:

A: Preparing the 360 content with 16 channels
B: Injecting metadata using the Spatial Media Injector version modified by Angelo Farina

At the moment, the HOAST library (https://hoast.iem.at/) is the only platform that allows online video playback of 3rd Order Ambisonics, so the content created in this tutorial is meant to be watched locally using VLC player. For this tutorial, basic Python knowledge is advised.

For preparing a 360 video with 1st order Ambisonics, visit the link: https://www.zylia.co/blog/how-to-prepare-a-360-video-with-spatial-audio

PART A

1. As usual, start by recording your 360 video with the ZYLIA ZM-1 microphone, and remember to align the front of the ZM-1 with the front of the 360 camera.
2. After recording, import the 360 video and the 19-channel audio file into Reaper. Synchronize the audio and video.
3. On the ZM-1 audio track, insert ZYLIA Ambisonics Converter and select 3rd Order Ambisonics. This will convert your 19-channel track into 16 channels (3rd Order Ambisonics).
4. On the Master track, click the Route button and set the track channels to 16. The Master track now receives the signal from all 16 channels of the audio track.
5. Once the video is ready for exporting, click File – Render and use the following settings:

* Sample rate: 48000
* Channels: 16 (click on the field and manually type 16)
* Output format: Video (ffmpeg/libav encoder)
* Size: 3840 x 1920 (or Get width/height/framerate from current video item)
* Format: QT/Mov/MP4
* Video Codec: H.264
* Audio Codec: 24 bit PCM

Render the video.

PART B

Once you have the 360 video with 16 channels, you need to inject the Spatial Audio metadata. This requires Python. Python is preinstalled in macOS, but you can also download Python 2.7 here: https://www.python.org/download/releases/2.7/

Afterward, download Angelo Farina’s modified version of Spatial Media Metadata Injector, located at: http://www.angelofarina.it/Ambix+HL.htm

Next:

1. With the downloaded file located on your Desktop, run the macOS Terminal application.
2. Using the “cd” command, go to the folder where you have Spatial Media Injector (e.g. “cd ~/Desktop/spatial-media-2/”).
3. Run the Python script: “sudo python setup.py install”.
4. Type your password.
5. After the build is complete, type the command: “cd build/lib/spatialmedia”.
6. Enter “python gui.py” and the application should run.

With the Spatial Media Metadata Injector open, simply open the created 360 video file and check the boxes for the 360 format and spatial audio. Inject the metadata, and your video will be ready for playback with 3rd Order Ambisonics audio.
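Before injecting metadata, it can help to confirm that the rendered file really carries the 16 channels at 48000 Hz set in Part A. The sketch below is one possible way to do that and is not part of the original workflow. It assumes `ffprobe` (shipped with FFmpeg) is installed and on your PATH, and that you run it with Python 3.7 or newer rather than the Python 2.7 used for the injector. All function names are hypothetical.

```python
import json
import subprocess
import sys


def ambisonic_channel_count(order: int) -> int:
    """Full-sphere Ambisonics of order N carries (N + 1)^2 channels."""
    if order < 0:
        raise ValueError("order must be non-negative")
    return (order + 1) ** 2


def first_audio_stream(ffprobe_json: str) -> dict:
    """Return the first audio stream entry from ffprobe's JSON report."""
    for stream in json.loads(ffprobe_json).get("streams", []):
        if stream.get("codec_type") == "audio":
            return stream
    raise ValueError("no audio stream found")


def check_render(stream: dict, order: int = 3, sample_rate: int = 48000) -> list:
    """List mismatches between a stream and the tutorial's render settings."""
    problems = []
    expected = ambisonic_channel_count(order)
    channels = int(stream.get("channels", 0))
    rate = int(stream.get("sample_rate", 0))  # ffprobe reports this as a string
    if channels != expected:
        problems.append(f"expected {expected} channels, found {channels}")
    if rate != sample_rate:
        problems.append(f"expected {sample_rate} Hz, found {rate} Hz")
    return problems


def probe(path: str) -> str:
    """Run ffprobe on a file and return its JSON report as text."""
    result = subprocess.run(
        ["ffprobe", "-v", "error", "-show_streams", "-of", "json", path],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


if __name__ == "__main__" and len(sys.argv) == 2:
    # Usage: python3 check_render.py <path to the rendered video>
    issues = check_render(first_audio_stream(probe(sys.argv[1])))
    print("OK" if not issues else "\n".join(issues))
```

The same helper gives 4 channels for 1st order and 9 for 2nd order, so passing a different `order` covers the other formats mentioned on this page.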

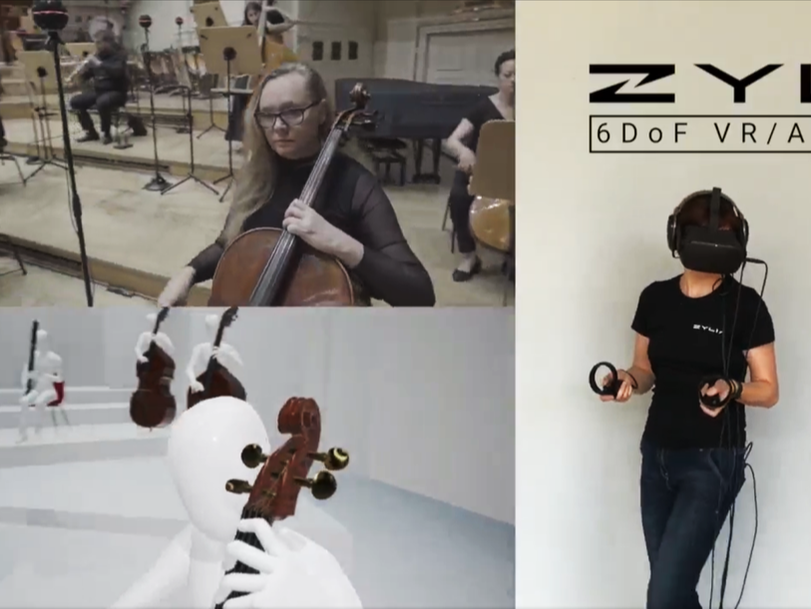

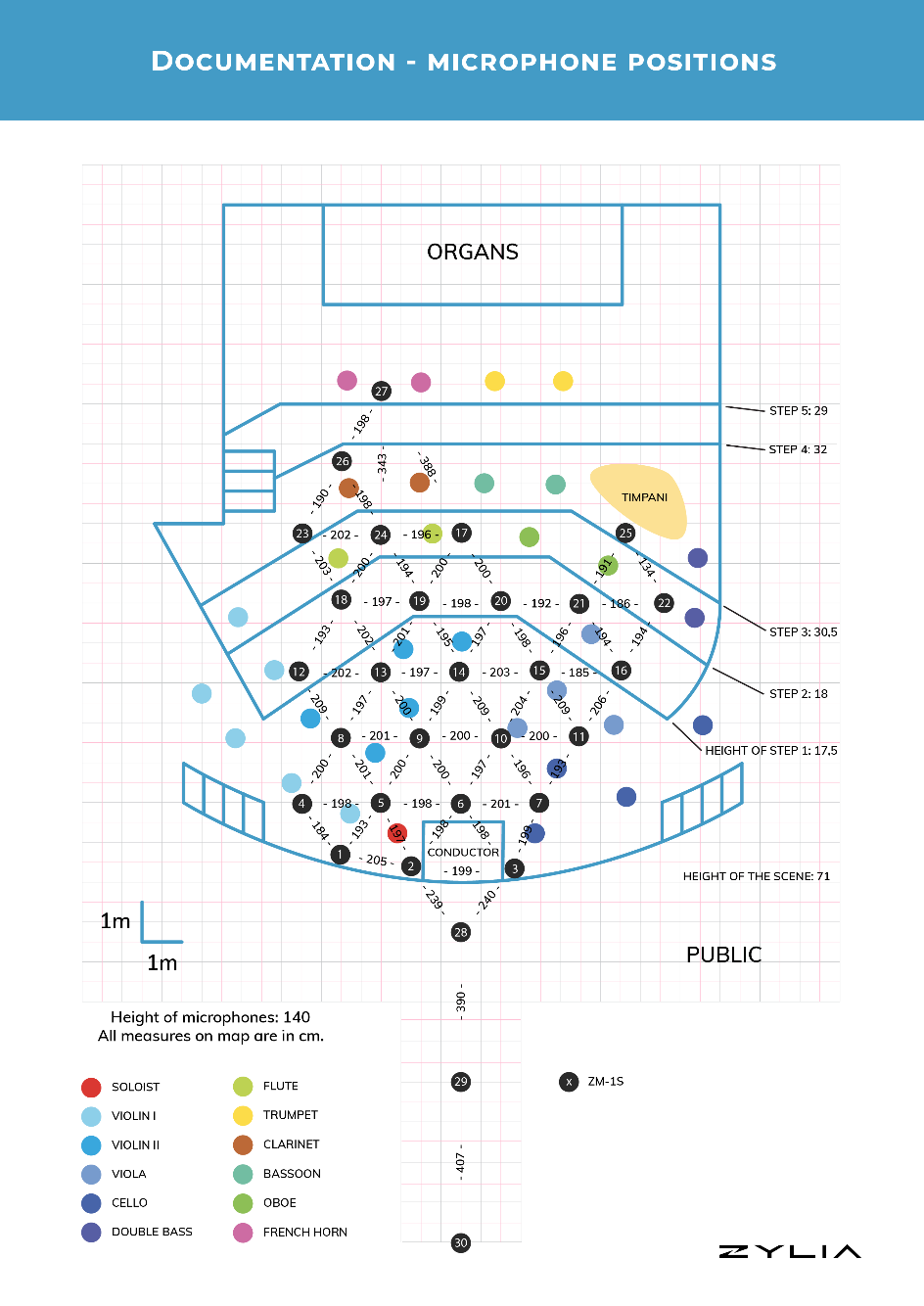

The idea

Zylia, in collaboration with the Poznań Philharmonic Orchestra, presented the world's first navigable audio in a live-recorded performance of a large classical orchestra. 34 musicians on stage and 30 ZYLIA 3rd order Ambisonics microphones made it possible to create a virtual concert hall, where each listener can follow their own audio path and get a real being-there sound experience.

ZYLIA 6 Degrees of Freedom Navigable Audio is a solution based on Ambisonics technology that allows recording an entire sound field around and within any performance imaginable. For a listener, it means that while listening to a live-recorded concert they can walk through the audio space freely. For instance, they can approach the stage, or even step onto the stage to stand next to a musician. At every point, the sound they hear will be a bit different, as in real life. Right now, this is the only technology of its kind in the world.

The 6 Degrees of Freedom in the name of Zylia’s solution refer to 6 kinds of possible movement: up and down, left and right, forward and backward, rotating left and right, tilting forward and backward, and rolling sideways. In post-production, the exact positions of the microphones placed in the concert hall are mirrored in the virtual space through the ZYLIA software. Once this is done, the listener can create their own audio path, moving in the 6 ways mentioned above, and choose any listening spot they want.

Technical information

6DoF sound can be produced with an object-based approach: by placing pre-recorded mono or stereo files in a virtual space and then rendering the paths and reflections of each wave in this synthetic environment. Our approach, by contrast, uses multiple Ambisonics microphones, which allows us to capture sound in almost every place in the room simultaneously. It therefore provides a 6DoF sound that consists only of real-life audio recorded in a real acoustic environment.

How was it recorded?
* Two MacBook Pros for recording
* A single Linux PC workstation serving as a backup for recordings
* 30 ZM-1S mics – 3rd order Ambisonics microphones with synchronization
* 600 audio channels – 20 channels from each ZM-1S mic multiplied by 30 units
* 3 hours of recordings, 700 GB of audio data

Microphone array placement

The placement of 30 ZM-1S microphones on the stage and in front of it.
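As a quick sanity check of the figures in the equipment list, the short sketch below reproduces the channel count and estimates the raw data rate. The microphone and channel numbers come from the list above. The sample rate and bit depth are assumptions (48 kHz, 24-bit PCM) that are not stated for this session, and decimal gigabytes are assumed for the storage figure.

```python
MICROPHONES = 30          # ZM-1S units, from the list above
CHANNELS_PER_MIC = 20     # channels per ZM-1S, from the list above
SAMPLE_RATE_HZ = 48_000   # assumption: not stated for this recording
BYTES_PER_SAMPLE = 3      # assumption: 24-bit PCM
STORAGE_BYTES = 700e9     # 700 GB, assuming decimal gigabytes


def total_channels(mics: int, channels_per_mic: int) -> int:
    """Number of audio channels recorded in parallel."""
    return mics * channels_per_mic


def pcm_bytes_per_second(channels: int, sample_rate_hz: int, bytes_per_sample: int) -> int:
    """Raw, uncompressed PCM data rate for the whole array."""
    return channels * sample_rate_hz * bytes_per_sample


def hours_for_storage(storage_bytes: float, bytes_per_second: float) -> float:
    """Hours of continuous audio that fit into the given storage."""
    return storage_bytes / bytes_per_second / 3600


if __name__ == "__main__":
    channels = total_channels(MICROPHONES, CHANNELS_PER_MIC)
    rate = pcm_bytes_per_second(channels, SAMPLE_RATE_HZ, BYTES_PER_SAMPLE)
    print("channels:", channels)
    print("data rate (MB/s):", rate / 1e6)
    print("hours in 700 GB:", round(hours_for_storage(STORAGE_BYTES, rate), 2))
```

Under these assumptions the array produces 86.4 MB of raw PCM per second, so 700 GB corresponds to a little over two hours of continuous 600-channel audio, which is the same order of magnitude as the 3 hours of recordings listed above.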

Recording process

To be able to choose the best versions of the performances, the Orchestra played the Overture nine times and the Aria eight times, with three additional overdubs. Alongside the audio recording, we captured video to document the event. The film crew placed four static cameras in front of the stage and on the balconies. One cameraman moved along a pre-planned path on the stage. Additionally, we put two 360-degree cameras among the musicians. Our chief recording engineer made sure that everything was ready (static cameras, moving camera operator, 360 cameras and recording engineers) and then gave a sign to the Conductor to begin the performance. When the LED rings on the 30 arrays turned red, everybody knew that the recording had started.

Data

A large amount of data makes it possible to explore the same moment in endless ways. Recording all 19 takes of the two music pieces resulted in 700 GB of stored audio. The entire recording and preparation process was documented on film with several cameras. Around 650 GB of video was captured. In total, we gathered almost 1.5 TB of data.

Post-processing and preparing data for the ZYLIA 6DoF renderer

First, we had to prepare the 3D model of the stage. The model of the concert hall was redesigned to match the real-life dimensions. Then, we placed the microphones and musicians according to accurate measurements. When this was done, specific parameters of the interpolation algorithm in the ZYLIA 6DoF HOA Renderer had to be set. The next task was the most difficult one in post-production: matching the real camera sequences with the sequences from the VR environment in Unreal Engine. After this painstaking process of matching the paths of the virtual and real cameras, a connection between Unreal and Wwise was established. This made it possible to render the sound along the defined path in Unreal, just as if someone were walking there in VR. Last but not least, we had to synchronize and connect the real and virtual video with the desired audio.

The outcome

The outcome of this project is presented in the “The Walk Through The Music” movie, where we enter the music spectacle from the audience position and move around the artists on the stage. You can also watch the “Making of” movie for more detailed information on what the setup looked like.

Want to learn more about volumetric audio recording? Contact our experts:


© Zylia Sp. z o.o., copyright 2018. ALL RIGHTS RESERVED.
