
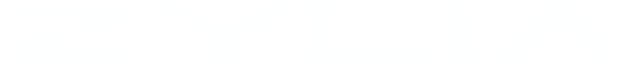

We are happy to announce the release of ZYLIA Studio version 2.1.1 for Windows and macOS. With this version, we introduce a Chinese-language version of the application. China is a huge market full of great musicians and sound engineers, and we have prepared the Chinese version of our hit software especially for them. ZYLIA Studio is an application dedicated to working with the ZM-1 3rd order Ambisonics microphone array. It provides an easy workflow for one-mic multi-track recording, AUTOMIXING, and manual mixing of tracks to prepare a balanced recording. Additionally, a few small bugs related to the stability of the energy map were fixed.

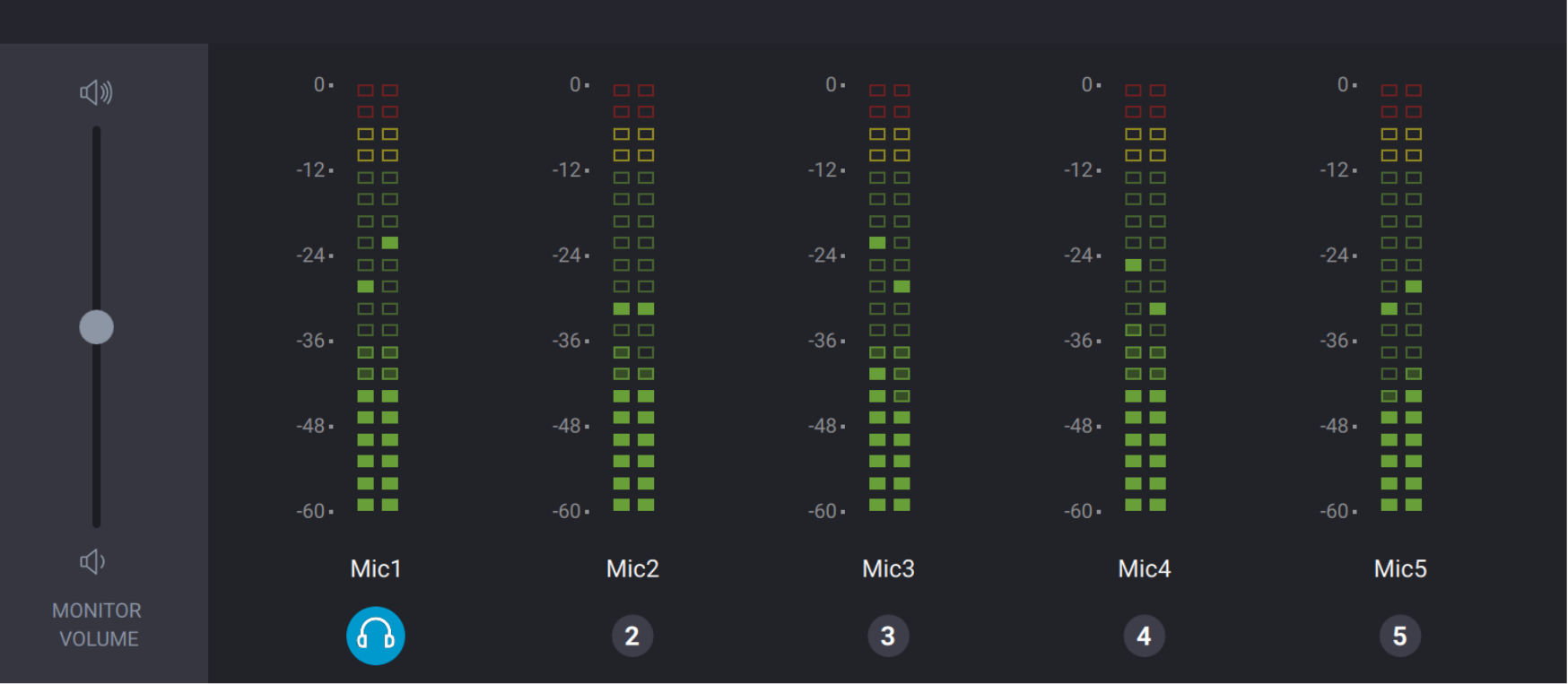

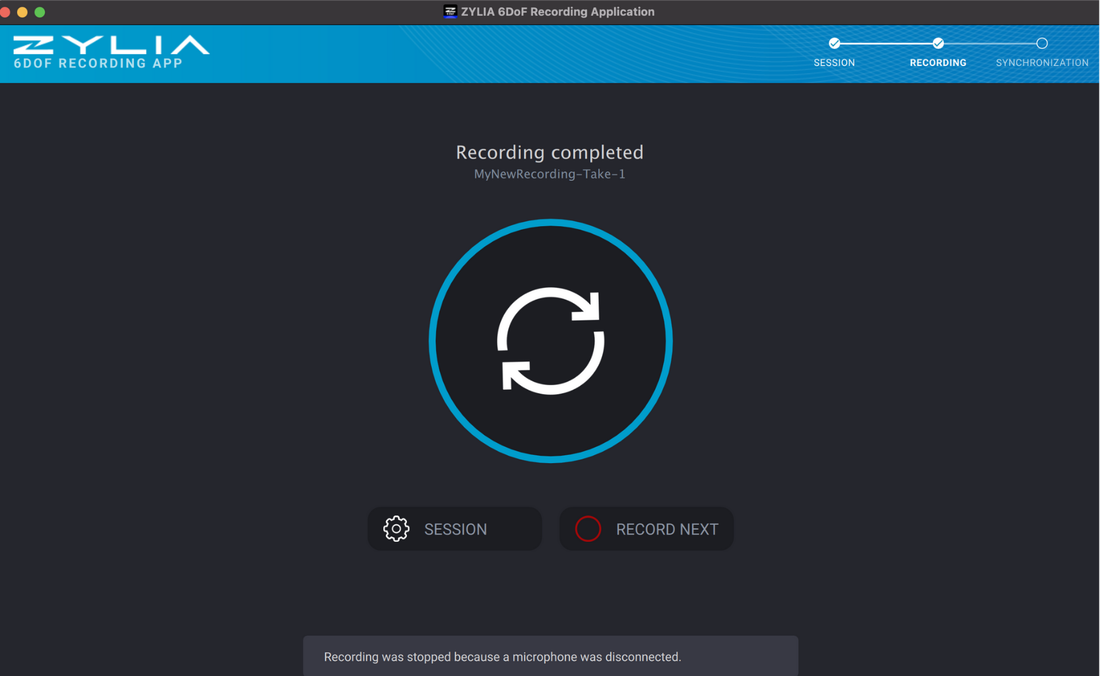

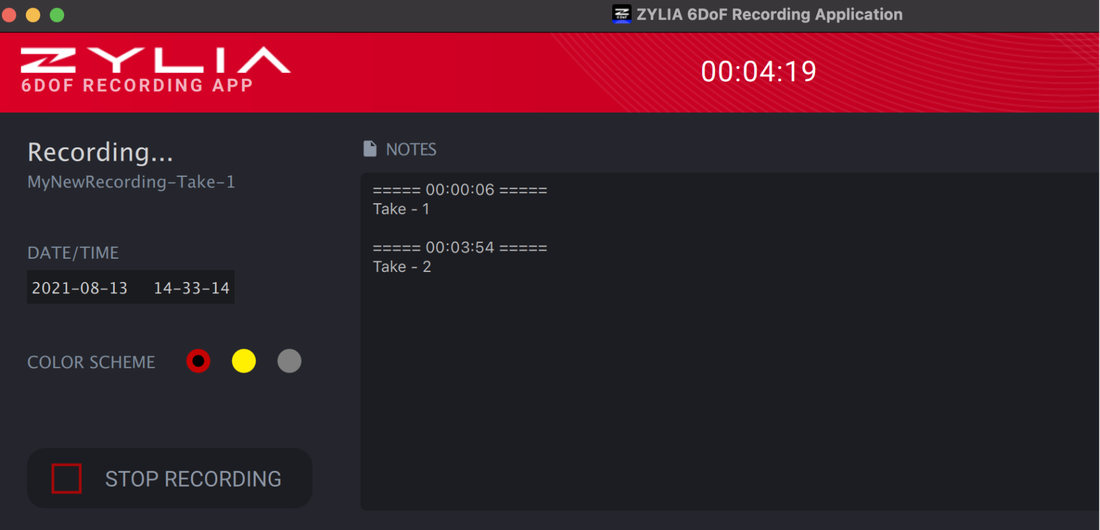

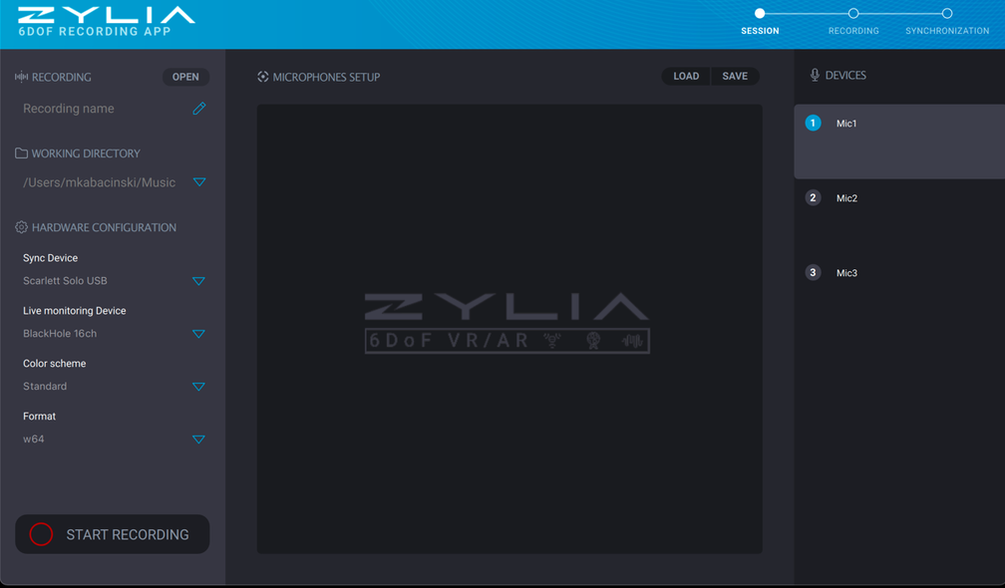

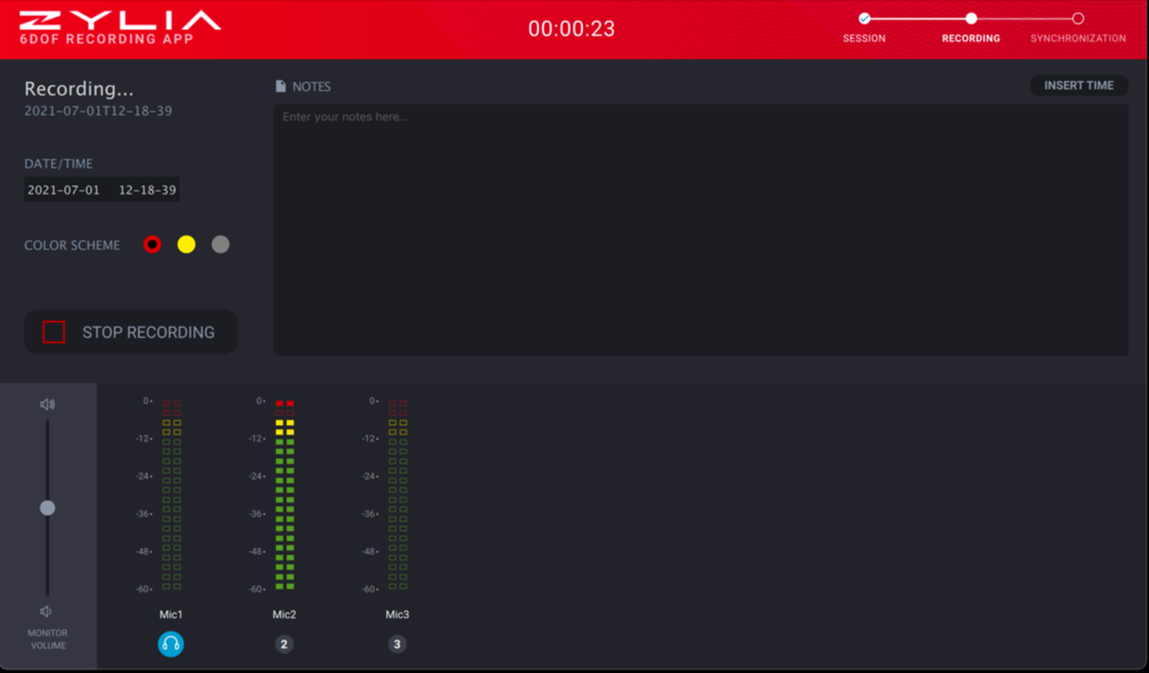

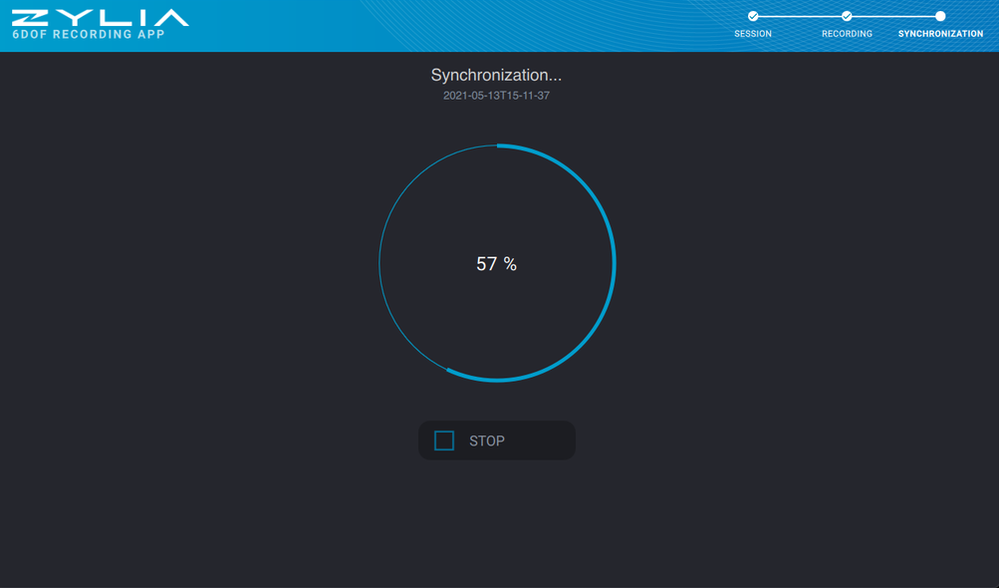

RECORDING MULTIPOINT 3D AUDIO HAS NEVER BEEN EASIER! ZYLIA 6DoF RECORDING APPLICATION V.1.0.0

8/20/2021

Tiger Woods once said that no matter how good you get, you can always get better, and that's the exciting part. Here at Zylia, we couldn’t agree more. We are therefore thrilled to present a new and, more importantly, improved version of our ZYLIA 6DoF Recording Application.

Intuitiveness, efficiency, and a great look: that is how, in a few words, we can describe the ZYLIA 6DoF Recording Application, which we released just recently. Let's take a closer look at it together!

For those a little less familiar with our products: the ZYLIA 6DoF Recording Application is part of the ZYLIA 6 Degrees of Freedom Navigable Audio solution, which is the most advanced technology on the market for recording and post-processing multipoint 3D audio. The solution is based on multiple Higher Order Ambisonics microphones, which capture large sound scenes in high spatial resolution, and a set of software for recording, synchronizing signals, converting audio to B-Format, and rendering HOA files.

The new ZYLIA 6DoF Recording Application has replaced the command-line toolkit for recording and synchronization. Thanks to a clear and comfortable graphical user interface, working with the app is fast and easy. We’ve also added a number of new features that significantly improve the user experience.

GET OFF TO A GOOD START

The ZYLIA 6DoF Recording Application was designed as a tool for capturing 3D audio content from multiple ZYLIA ZM-1S microphones (up to 3000 channels on a single computer). To make the entire process smoother, we’ve introduced a set of very useful options:

BETTER THAN EVER

Although the command-line toolkit did its job very well, our drive for excellence and our constant attention to our customers' opinions resulted in a new, better version of the Recording Application. During the optimization, we not only kept the old, proven features but also added new ones and took care of the visual aspect of the interface. Here are all the great improvements we introduced:

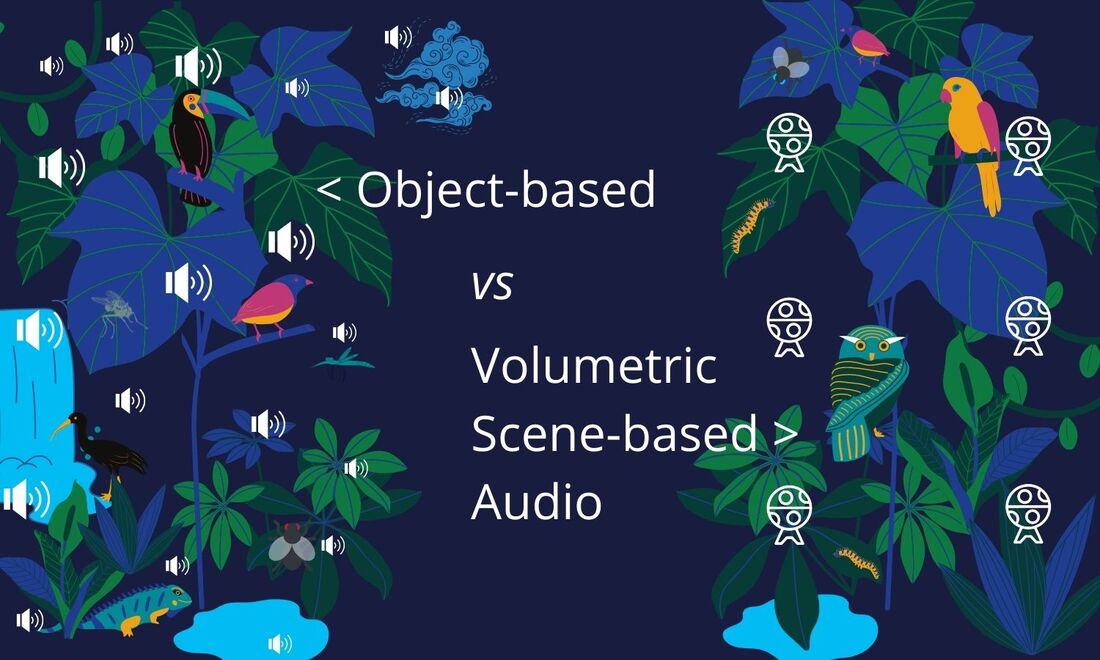

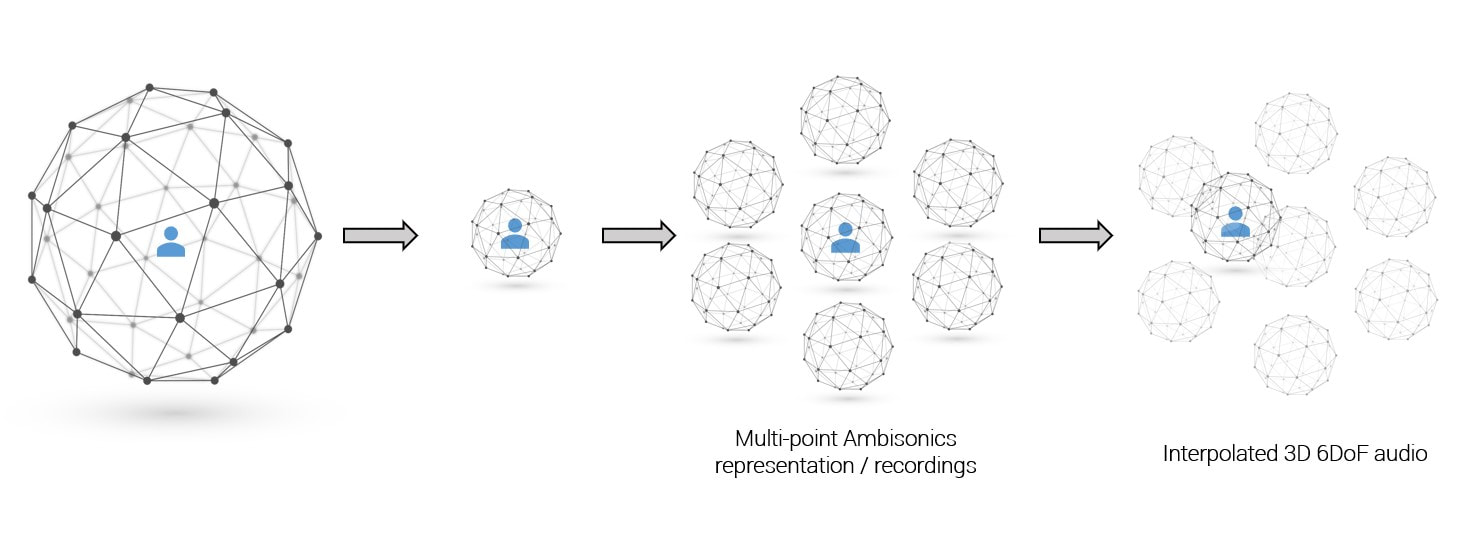

THE ICING ON THE CAKE

While working on the new ZYLIA 6DoF Recording Application, we have not forgotten to make the interface aesthetically pleasing and familiar to our users, granting great usability and a joyful experience. See for yourself:

Efficient Volumetric Scene-based audio with ZYLIA 6 Degrees of Freedom solution

What is the difference between Object-based audio (OBA) and Volumetric Scene-based audio (VSBA)?

OBA

The most popular method of producing a soundtrack for games is known as Object-based audio. In this technique, the entire audio consists of individual sound assets with metadata describing their relationships and associations. Rendering these sound assets on the user's device means assembling these objects (sound + metadata) to create an overall user experience. The rendering of objects is flexible and responsive to user, environmental, and platform-specific factors [ref.].

In practice, if an audio designer wants to create an ambience for an adventure in a jungle, he or she needs to use several individual sound objects: for example, the wind rustling through the trees, the sounds of wild animals, the sound of a waterfall, the buzzing of mosquitoes, etc.

The complexity of Object-based rendering increases with the number of sound objects. The more individual objects there are (that is, the more complex the audio scene is), the higher the CPU usage and hence the power consumption. This can be problematic on mobile devices or when bandwidth is limited during data transmission.

VSBA

A complementary approach for games is Volumetric Scene-based audio, especially if the goal is to achieve natural behavior of the sound (reflections, diffraction). VSBA is a set of 3D sound technologies based on Higher-Order Ambisonics (HOA), a format for the modeling of 3D audio fields defined on the surface of a sphere. It allows for accurate capturing, efficient delivery, and compelling reproduction of 3D sound fields on any device (headphones, loudspeakers, etc.). VSBA and HOA are deeply interrelated; therefore, these two terms are often used interchangeably.

Higher-Order Ambisonics is an ideal format for productions that involve large numbers of audio sources, typically held in many stems. While transmitting all these sources plus meta-information may be prohibitive as OBA, the Volumetric Scene-based approach limits the number of PCM (Pulse-Code Modulation) channels transmitted to the end-user as compact HOA signals [ref.].

ZYLIA's interpolation algorithm for 6DoF 3D audio

Creating a sound ambience for an adventure in a jungle through Volumetric Scene-based audio can be as simple as taking multiple HOA microphones to a natural environment that produces the desired soundscape and recording an entire 360° audio sphere around the devices.

The main advantage of this approach is that the complexity of VSBA rendering does not increase with the number of objects. This is because the source signals are converted to a fixed number of HOA signals that depends only on the HOA order, not on the number of objects present in the scene. This is in contrast with OBA, where rendering complexity increases as the number of objects increases. Note that Object-based audio scenes can profit from this advantage by being converted to HOA signals, i.e., Volumetric Scene-based audio assets.

To summarize, the advantages of the Volumetric Scene-based audio approach affecting the CPU and power consumption are:

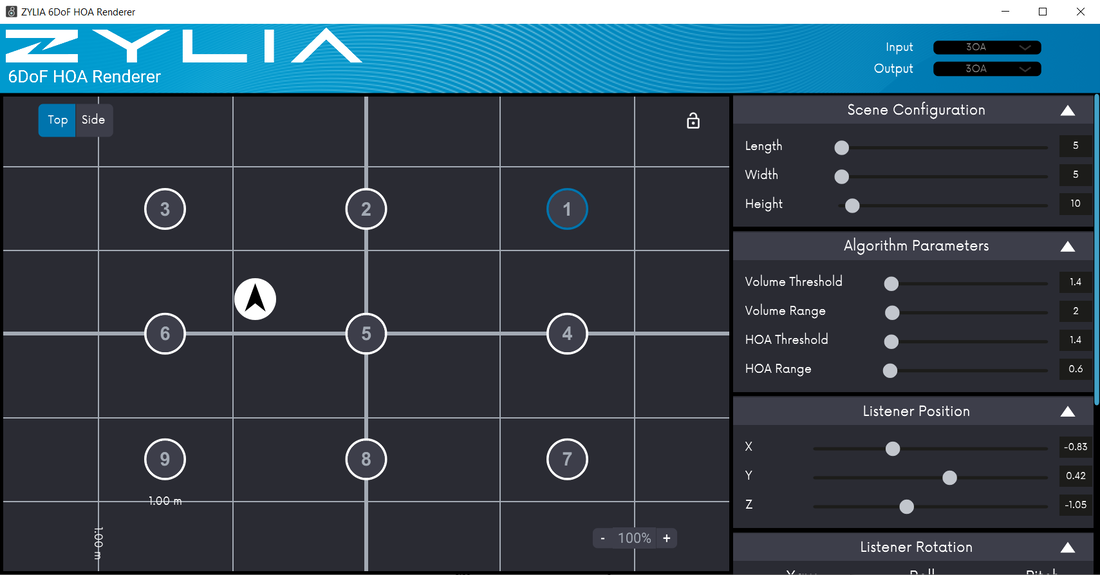
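The point about a fixed number of HOA signals can be illustrated with a little arithmetic. The sketch below assumes full-sphere Ambisonics, where an order-N representation uses (N + 1)² channels, and it simplifies Object-based audio to one transmitted signal per object. The function names are illustrative only and do not come from any ZYLIA software.

```python
def hoa_channel_count(order: int) -> int:
    """Number of channels in a full-sphere Ambisonics signal of the given order."""
    if order < 0:
        raise ValueError("Ambisonics order must be non-negative")
    return (order + 1) ** 2


def transmitted_signals(num_objects: int, hoa_order: int) -> dict:
    """Compare signal counts under a simplified model.

    Assumption: Object-based audio sends one signal per object, while
    Scene-based audio always sends the fixed HOA channel count.
    """
    return {
        "object_based": num_objects,
        "scene_based": hoa_channel_count(hoa_order),
    }


if __name__ == "__main__":
    # The scene-based count stays constant while the object count grows.
    for num_objects in (4, 16, 64, 256):
        counts = transmitted_signals(num_objects, hoa_order=3)
        print(num_objects, counts["object_based"], counts["scene_based"])
```

Under these assumptions, a 3rd order scene always uses 16 channels, whether it was built from 4 sound objects or from 256.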

Zylia 6 Degrees of Freedom Navigable Audio

One of the most innovative and efficient tools for producing Volumetric Scene-based audio is the ZYLIA 6 Degrees of Freedom Navigable Audio solution. It is based on several Higher Order Ambisonics microphones, which capture large sound scenes in high resolution, and a set of software for recording, synchronizing signals, converting audio to B-Format, and rendering HOA files. The Renderer can also be used independently of the 6DoF hardware to create navigable 3D assets for audio game design.

ZYLIA 6 DoF HOA Renderer is a MAX/MSP plugin available for macOS and Windows. It allows processing and rendering of ZYLIA Navigable 3D Audio content. With this plugin, users can play back the synchronized Ambisonics files, change the listener’s position, and interpolate multiple Ambisonics spheres. The plugin is also available for Wwise, allowing developers to use ZYLIA Navigable Audio technology in various game engines.

Watch the comparison between Object-based audio and Volumetric Scene-based audio produced with the Zylia 6 Degrees of Freedom Navigable Audio solution. Notice how the 6DoF approach reduces CPU usage during sound rendering.

Volumetric Scene-based audio and Higher Order Ambisonics can be used for many different purposes, not only for creating soundtracks for games. This format is very efficient when producing audio for:

#zylia #gameaudio #6dof #objectbased #scenebased #audio #volumetric #gamedevelopment #GameDevelopersConference #GDC2021 #GDC

Want to learn more about multi-point 360 audio and video productions? Contact our Sales team:

We are happy to announce the release of the ZYLIA 6DoF Recording Application version 1.0.0 for Linux and macOS. This application is part of the ZYLIA 6DoF Navigable Audio solution (ZYLIA 6DoF VR/AR set). It replaces the command-line toolkit for the recording and synchronization process. For your comfort, this application has a graphical user interface, so there is no need to use the command line anymore. This application offers all the features of the ZYLIA 6DoF Recording Toolkit, such as:

Additionally, a few new features have been added.

Configure your session. Make the recording. Synchronize raw audio files. The command-line application will remain available but will not be developed further.

For this workflow you will require:

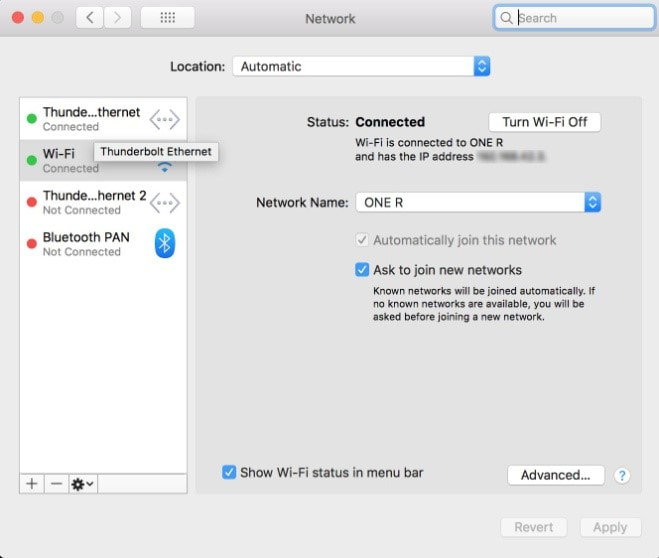

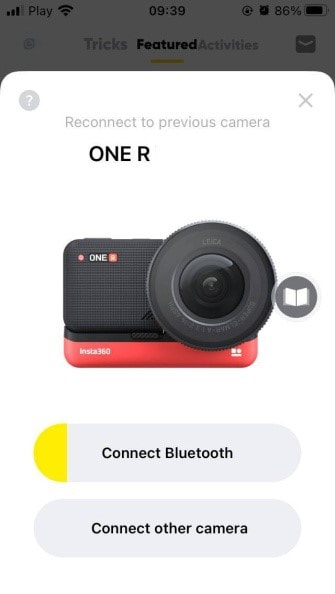

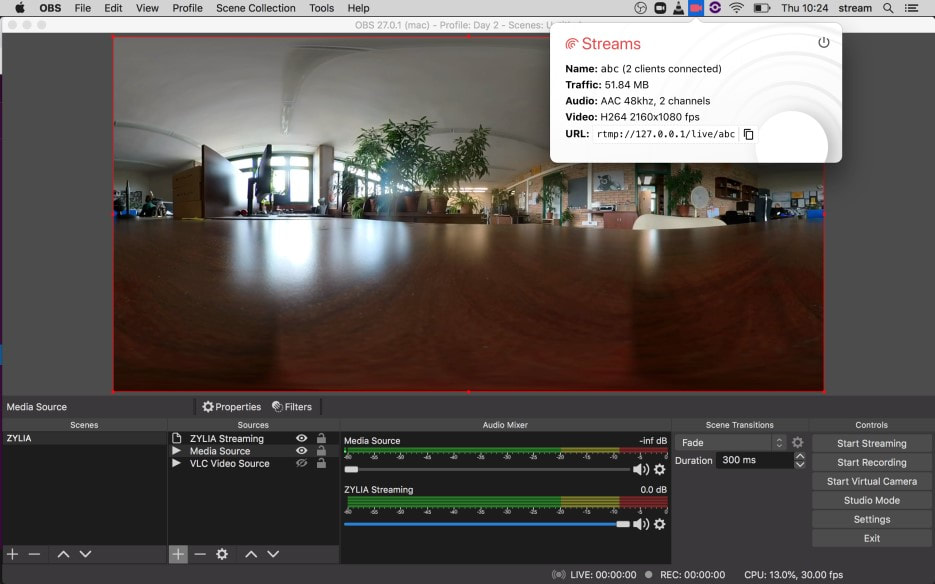

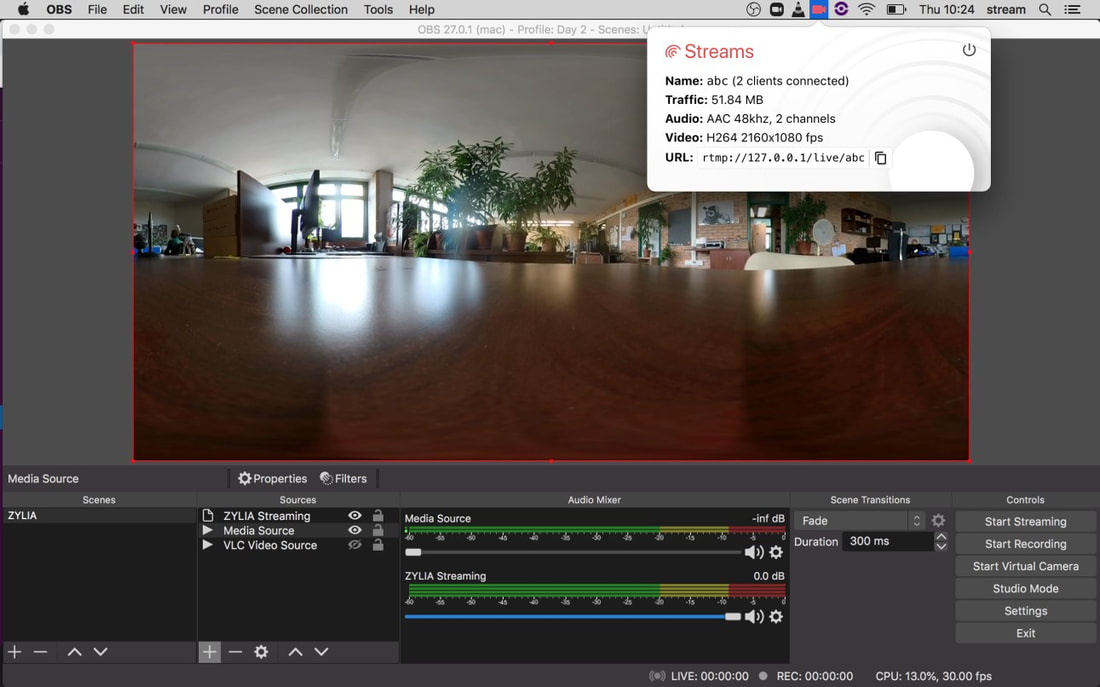

This project consists of 3 main parts:

A. Receiving a live 360° video from the Insta360 to OBS

B. Receiving Ambisonics audio from the ZM-1 microphone to OBS

C. Configuring Facebook for a live stream of 360° video with Ambisonics audio

A. Receiving a live 360° video from the Insta360 to OBS

1. Turn on the Insta360 camera and connect your computer's Wi-Fi to the corresponding Insta360 Wi-Fi network.
2. At the same time, connect your computer to your local network using an Ethernet cable. Your network preferences should show that you are connected over both Ethernet and Wi-Fi. In step 4 you will need the IP address of the Insta360 Wi-Fi connection listed in your network preferences.
3. On macOS, open the Local RTMP server application (https://github.com/sallar/mac-local-rtmp-server). This simple application will be used to receive a live stream from the Insta360 camera. It also provides you with the local host address for OBS.

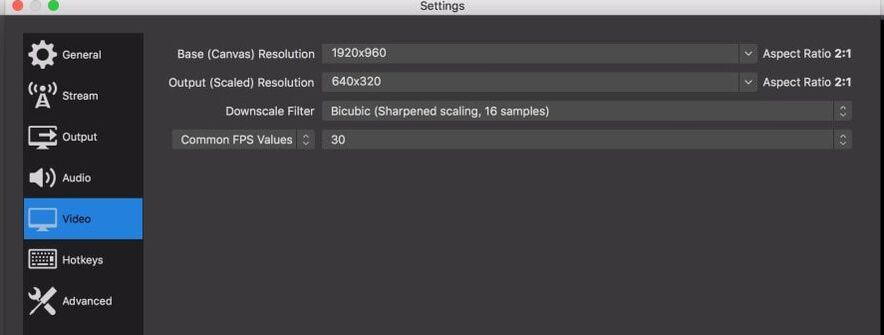

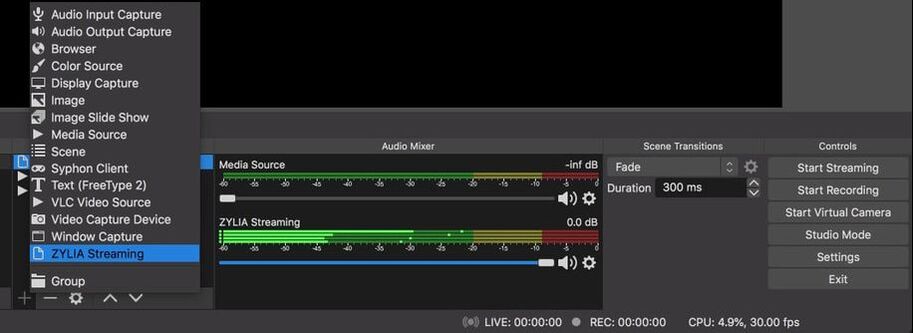

Start streaming from the Insta360. The Local RTMP server application icon should now turn red, indicating that it is receiving the stream.

5. Open OBS and set up your project:

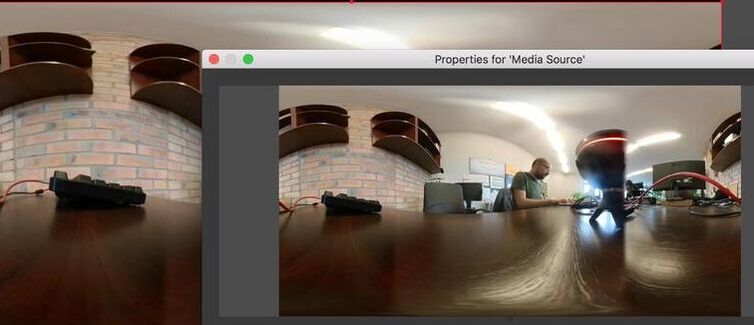

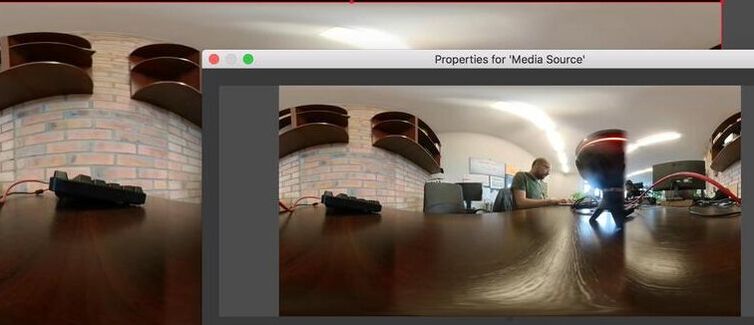

6. To receive the video stream from the Insta360 to OBS:

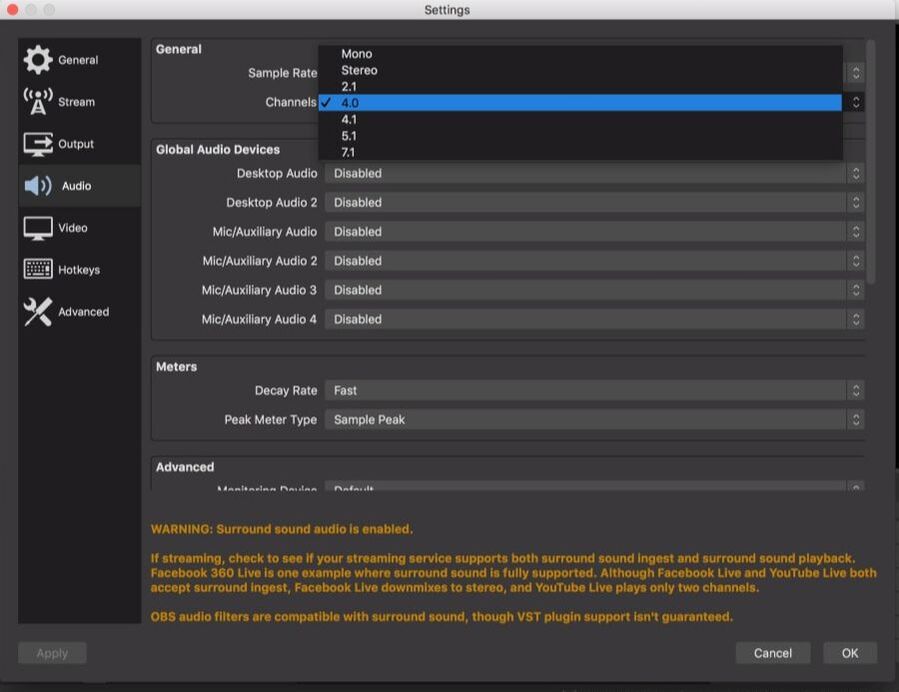

After adding the source, you should be receiving a 360° video from the Insta360 in OBS.

B. Receiving Ambisonics audio from the ZM-1 microphone to OBS

7. Connect your ZM-1 microphone to your computer. Open the ZYLIA Streaming application.

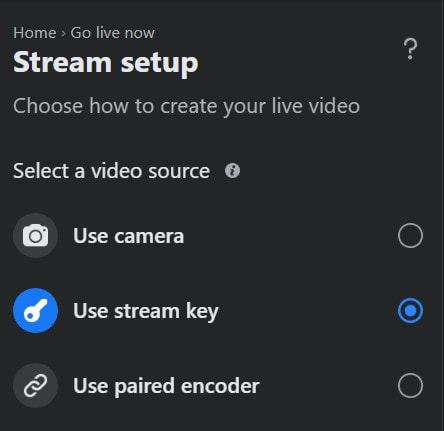

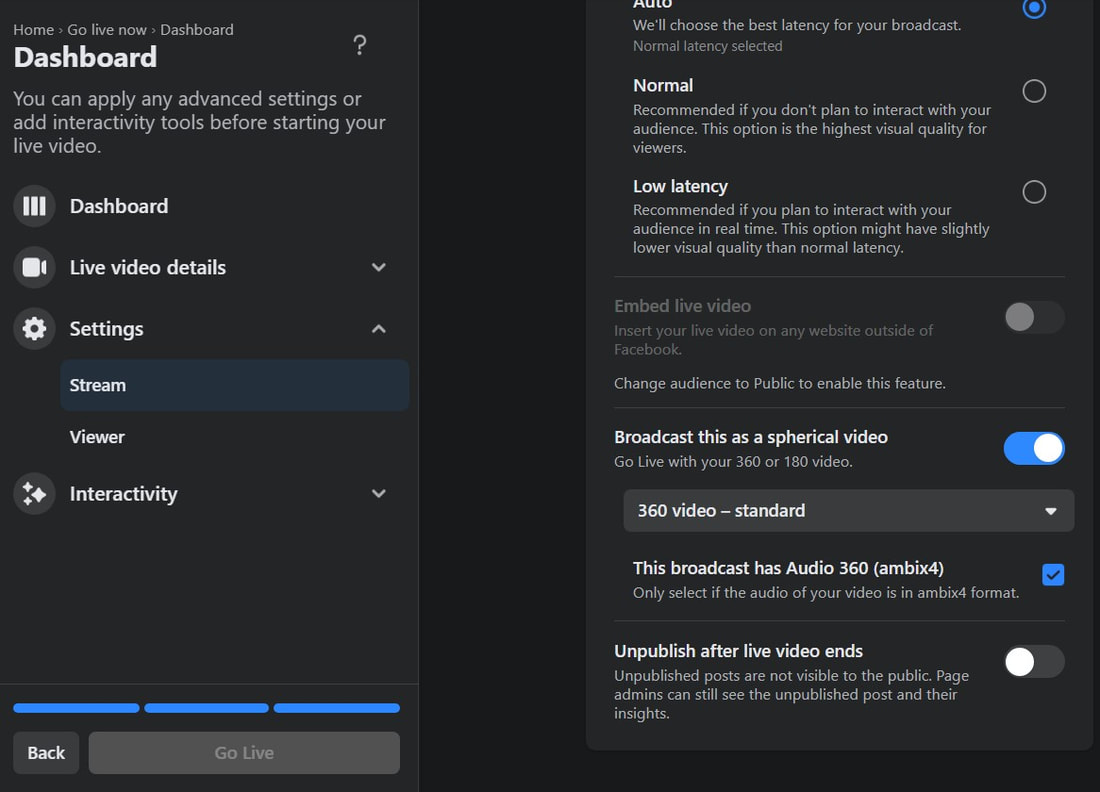

8. In OBS, add a source and select the ZYLIA Streaming application. You are now receiving 1st Order Ambisonics audio (4 channels) and a 360° video in OBS.

C. Set up Facebook for livestreaming

9. In Settings – Stream, enable “Broadcast this as a spherical video” and also “This broadcast has Audio 360 (ambix4)”.

10. In OBS, press “Start Streaming”. You are now live!
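The four channels mentioned in the steps above follow a fixed order. The sketch below assumes that “ambix4” denotes four-channel, first-order audio in the AmbiX convention, which uses ACN channel ordering. In ACN, the channel index is n² + n + m for spherical-harmonic degree n and order m. The function and constant names are illustrative and are not part of OBS, Facebook, or ZYLIA software.

```python
import math
from typing import Tuple


def acn_to_degree_order(acn: int) -> Tuple[int, int]:
    """Map an ACN channel index to its spherical-harmonic (degree n, order m)."""
    if acn < 0:
        raise ValueError("ACN index must be non-negative")
    degree = math.isqrt(acn)               # n = floor(sqrt(acn))
    order = acn - degree * degree - degree  # m = acn - n^2 - n
    return degree, order


# Traditional B-Format letters for the first-order channels, listed in ACN order.
FIRST_ORDER_LABELS = ("W", "Y", "Z", "X")

if __name__ == "__main__":
    for acn, label in enumerate(FIRST_ORDER_LABELS):
        degree, order = acn_to_degree_order(acn)
        print(f"channel {acn}: {label} (degree {degree}, order {order})")
```

If the audio routed into OBS uses a different channel order, the spatial image in the resulting stream may be rotated or mirrored, so it is worth checking the order before going live.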

We are happy to announce the release of new ZYLIA ZM-1 drivers for Windows 10 (v2.1.0). A version for Windows 7 will be released in the future.

New features:

Bug fixes:

Some of you who have come across the term ‘3D sound’ are probably wondering what it actually is. Intuitively, we may understand it by analogy to 3D space, which, as we know, is made up of three dimensions: width, depth, and height. In real life, any position in space is characterized by these dimensions. Now imagine that you are outside, for example in a park full of various sounds. You can hear children laughing on the playground behind you, a dog barking on your left, and a couple of people talking while sitting on a bench in front of you. You can hear their voices more and more clearly as you move towards them. We may say that you are in a 3-dimensional sound space. When you move, the sound you hear (its intensity, direction, height, and even timbre) changes as well, corresponding to the position of your ears. Reproducing this natural human way of hearing in a recording isn’t easy. However, we already have technologies that allow us to do it: binaural and Ambisonics sound.

The most common type of recording, which you hear while listening to music on your computer or watching TV, is stereo. You may also come across mono recordings, but these have largely been superseded since the introduction of stereo mixing. Stereo sound essentially lets you hear only whether a sound comes from the left or the right, which is its main advantage over mono audio. Below, you may find some great recordings that will allow you to hear the difference between these formats. We have also summarized their characteristics in bullet points to present the information as clearly as possible.

MONO SOUND

STEREO SOUND

BINAURAL SOUND

AMBISONICS SOUND

#zylia #binaural #ambisonics #3Daudio #surround #spatial #sound


© Zylia Sp. z o.o., copyright 2018. ALL RIGHTS RESERVED.
