ZYLIA 6 DEGREES OF FREEDOM NAVIGABLE LIVE RECORDED AUDIO

Capture high-quality Higher-Order Ambisonics (HOA) sound fields at multiple points in the recorded scene simultaneously.

Experience the freedom of experimenting with different spatial arrangements of the recorded scene.

THE MOST HUMAN-LIKE SOUND EXPERIENCE

Record, create and stream 3D sound and let your audience experience the most realistic, immersive walk through the sound scene.

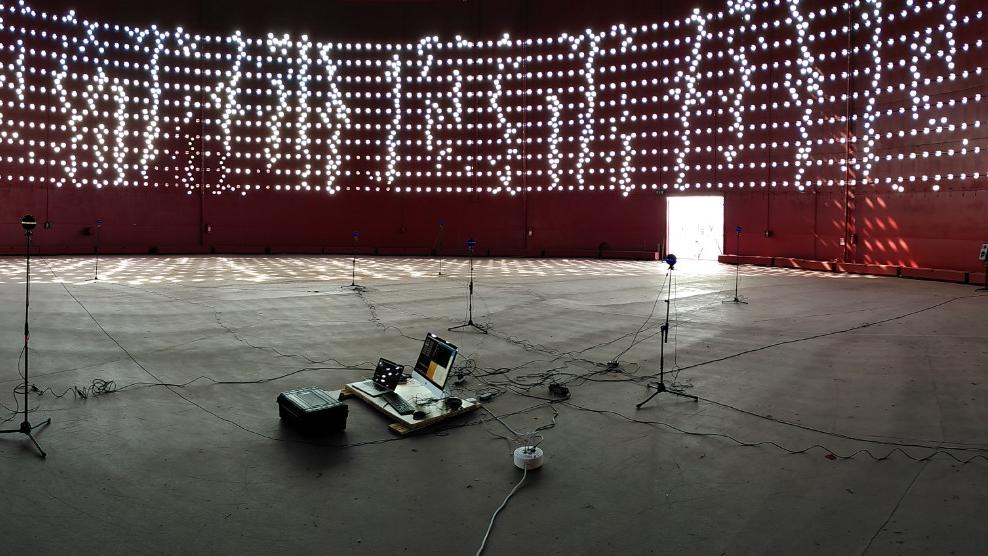

Created specifically to support the production of 6 Degrees of Freedom (6DoF) and volumetric 3D audio experiences, the ZYLIA 6DoF Navigable Audio solution allows audio engineers and creatives to place multiple ZYLIA ZM-1S devices within a sound scene and capture audio that gives listeners a truly immersive experience, with unrestricted freedom of movement through the recorded 3D space.

FOR WHOM

VIRTUAL REALITY CREATORS

Offer a truly immersive virtual experience

CULTURAL INSTITUTIONS

Virtually reopen theaters and concert halls to the public

RESEARCH TEAMS

Broaden the horizon of your research with navigable audio

GAMING COMPANIES

Give players a new, immersive sound experience in your games

CROSS THE BORDER BETWEEN THE MUSICIANS AND THE AUDIENCE

ZYLIA 6 Degrees of Freedom Navigable Audio is a solution for recording the entire sound field around and within any performance. For the listener, this means that during a 6DoF live concert they can walk freely through the audio space.

For instance, they can approach the stage or even step onto it to stand next to the musicians. The sound they hear changes with every listening position, just as it does in a real-life sound scene.

Zylia’s 6DoF system of multiple synchronized ZM-1S microphone arrays puts serious, unprecedented sound capture capabilities in the hands of audio practitioners across many disciplines. What emerges is a new capacity for 3D processing and rendering of music. We are pleased to be involved in this exciting development towards an enriched experience of listening to music.

Immersive Media Lab, McGill University, Canada

Working with the Zylia 6DoF VR set was an amazing adventure and a journey into the future of audio. It fit perfectly with the concept of the Perspective Control Ambisonic Microphone Array (PCAMA), built from four ZYLIA ZM-1S microphones. This workflow opens up a whole new range of possibilities in recording post-production.

THEY TRUSTED US

ASK FOR AN OFFER AND WE WILL CONTACT YOU DIRECTLY

Jordan Rudess & Friends in 3D Audio

Multi-point 360° audio and video concert you can watch on your Oculus headset. The most immersive, deep and emotionally engaging 360° music experience.

ZYLIA 6DoF HOA Renderer

The plugin processes and renders 6DoF volumetric audio content by interpolating the sound field between multiple 3rd-order Ambisonics spheres. These spheres can be synthetic or recorded with multiple ZM-1S microphones.
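The page does not say how the renderer interpolates between spheres. To make the idea concrete, here is a minimal sketch that assumes the simplest possible scheme: an inverse-distance-weighted blend of the 16 channels of each 3rd-order Ambisonics sphere. The function `interpolate_hoa`, its parameters and the weighting scheme are hypothetical illustrations, not the ZYLIA 6DoF HOA Renderer's algorithm.

```python
import math
from typing import List, Sequence

HOA_ORDER = 3
NUM_CHANNELS = (HOA_ORDER + 1) ** 2  # 16 channels for 3rd-order Ambisonics

Vector = Sequence[float]
HoaSignal = List[List[float]]  # indexed as [channel][sample]


def interpolate_hoa(listener_pos: Vector,
                    array_positions: Sequence[Vector],
                    array_signals: Sequence[HoaSignal],
                    power: float = 1.0,
                    eps: float = 1e-9) -> HoaSignal:
    """Hypothetical inverse-distance-weighted blend of HOA spheres.

    Assumption: each array_signals[i] is a 16-channel HOA recording made
    at array_positions[i]; all recordings are time-aligned and equally long.
    """
    if not array_signals or len(array_positions) != len(array_signals):
        raise ValueError("need one HOA signal per array position")
    for sig in array_signals:
        if len(sig) != NUM_CHANNELS:
            raise ValueError(f"expected {NUM_CHANNELS} channels per array")

    # Distance from the listener to every microphone array.
    distances = [math.dist(listener_pos, p) for p in array_positions]

    # Listener standing on an array: return that array's sound field as-is.
    nearest = min(range(len(distances)), key=distances.__getitem__)
    if distances[nearest] < eps:
        return [list(ch) for ch in array_signals[nearest]]

    # Closer arrays get larger weights; weights are normalized to sum to 1.
    raw = [1.0 / d ** power for d in distances]
    total = sum(raw)
    weights = [w / total for w in raw]

    # Weighted sum of the arrays, channel by channel and sample by sample.
    num_samples = len(array_signals[0][0])
    out = [[0.0] * num_samples for _ in range(NUM_CHANNELS)]
    for w, sig in zip(weights, array_signals):
        for c in range(NUM_CHANNELS):
            for t in range(num_samples):
                out[c][t] += w * sig[c][t]
    return out
```

For example, with four arrays at the corners of a 2 m square and the listener at its center, every array gets a weight of 0.25. Plain signal blending like this ignores phase differences between arrays and can cause comb filtering, so it only illustrates the concept of interpolating between spheres.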

Volumetric Audio Concert

The border between musicians and the audience has existed for ages. Once you cross this border, you enter a real musical world. It is an experience I cannot describe. You have to feel it.

EXAMPLES OF CUSTOMERS USING VOLUMETRIC AUDIO RECORDING

Aalto Studios: Navigable spatial audio experience with new microphones

Aalto Studios: Spherical microphone arrays can provide a more natural remote presence in audio-visual art installations

Red Bull Media House: Pushing boundaries in spatial audio recording

SIT: Zylia's 3D audio technology complements the SIT studio to record and broadcast live artistic events with immersive sound

Immersive Media Lab, Leibniz Universität Hannover: Remote presence in virtual sound scene reproduction provided by spherical microphone arrays

Ellephant Gallery, Montreal: 3D audio documentation with ZYLIA 6DoF Volumetric audio set

Sherbrooke, Quebec, Canada: Centre de Recherche acoustique-signal-humain (CRASH) using multiple microphone arrays to measure speaker radiation characteristics

McGill University: Multiple HOA recordings of the Papadimitriou trio at Pollack Hall, McGill

Orford Musique: Recording of the project QUATRE sur le chemin at Center Musique Orford
